Tapomayukh Bhattacharjee, Cornell University

5/20/2021

Location: Zoom

Time: 2:40 p.m.

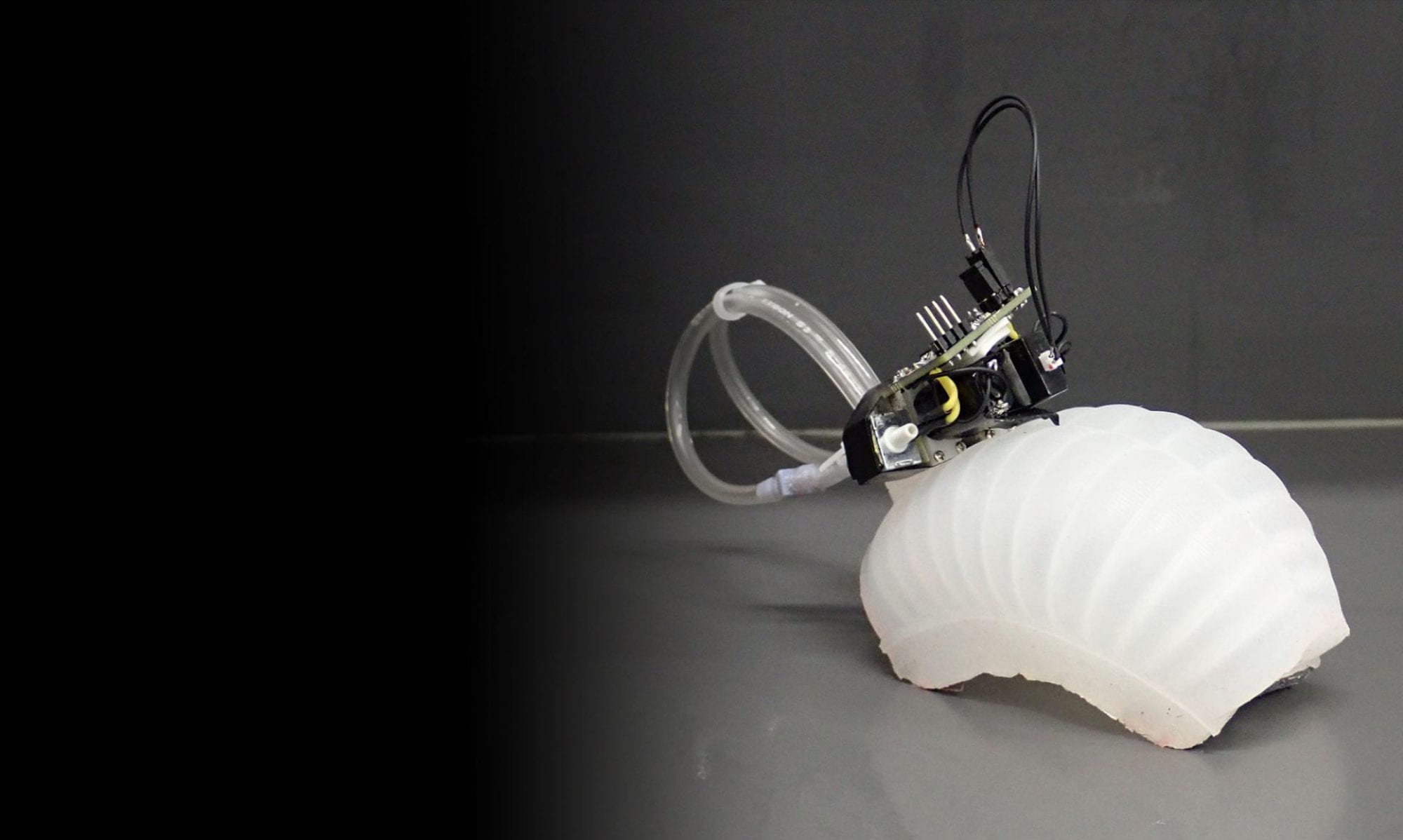

Abstract: How do we build robots that can help people with mobility limitations perform activities of daily living? To perform these activities successfully, a robot must be able to physically interact with humans and objects in unstructured human environments. In the first part of my talk, I will show how a robot can use multimodal sensing to infer properties of these physical interactions using data-driven methods and physics-based models. In the second part of the talk, I will show how a robot can leverage these properties to feed people with mobility limitations. Successful robot-assisted feeding depends on reliable acquisition of bites from hard-to-model, deformable food items and on easy bite transfer. Using insights from human studies, I will showcase algorithms and technologies that leverage multiple sensing modalities to perceive varied food item properties and determine successful strategies for bite acquisition and transfer. I will then show how, using feedback from all stakeholders, we built an autonomous robot-assisted feeding system based on these algorithms and technologies and deployed it in the real world to feed real users with mobility limitations. I will conclude the talk with some ideas for future work in my new lab at Cornell.